to sense how others are responding to us.

You can’t sense hesitation.

You can’t feel resistance.

You can’t detect disengagement until it’s too late.

So we keep talking.

Hoping it’s going well.

As a result…

Momentum is lost.

Customers feel unappreciated.

Employees feel ignored.

Teams drift apart.

Deals don’t close.

Commitments fade after the call.

The most important layer of communication — human signal awareness — disappears the moment the meeting goes virtual.

Installed locally on your device, Unfair Intel will interpret emotional signals, engagement, hesitation, and reactions in real time — turning them into private, goal-driven prompts visible only to you.

You’ll know what to say and what to do to re-align with your audience while the meeting is happening — when decisions are being made.

This new communication layer isn’t a question of if.

It’s a question of who builds it first.

Here’s what it will look like in a real meeting (1:27)…

We’re inviting a small group of leaders and builders to help shape the first version before public launch.

They’re helping bring a new communication layer into existence.

🚀 Join as an Early Access Leader

Get priority access, shape the first version, and move up the waitlist through aligned referrals.

🛠️ Join as an Early Access Developer / Builder

Help build the first real-time behavioral intelligence platform for virtual meetings. We’re assembling the founding technical team.

You’ll receive your personal referral link after signup.

The more aligned people you invite, the earlier your access.

Your feedback will directly shape the first real-time behavioral intelligence interface ever built.

If you’re joining as an Early Access Developer / Builder

You’ll receive early technical updates, opportunities to contribute, and recognition as a Founding Builder helping bring this new communication layer to life.

You may have the opportunity to contribute to the first real-time human-signal intelligence engine.

Founding early access members will be credited on the launch page as early contributors to the category.

The missing human layer of communication is becoming visible again.

Why this new communication layer is inevitable

Remote work didn’t just change where we meet. It removed the human signal layer that trust, alignment, and decisions depend on.

Why This Matters Now

- Today we are all virtually connected — but human emotional intelligence hasn’t followed us into the screen.

- In high-stakes virtual conversations, the moment-to-moment signals that drive trust, alignment, and decisions are largely invisible.

- AI can now interpret those missing human signals in real time and return them privately to the person who needs them most.

- This is the next evolution of communication — the moment technology begins restoring the human layer that video calls removed.

How It Works (in 30 seconds)

1. Set your goal. Start your meeting.

Before the call begins, choose your intention — Alignment, Persuasion, Closure, Reassurance, Coaching, or Conflict Resolution.

2. Read the room in real time.

AI interprets facial signals, vocal tone, micro-expressions, engagement, hesitation, and reactions as the meeting unfolds.

3. Receive private prompts.

Get discreet, goal-driven guidance telling you what to say or do next — while decisions are being made.

Built for Performance. Designed for Privacy.

Unfair Intel will run locally on your device and will be grounded in validated emotional-science frameworks and modern multimodal AI. Signals will be interpreted in real time and returned privately to you.

No public overlays. No automatic cloud recording. User-controlled consent.

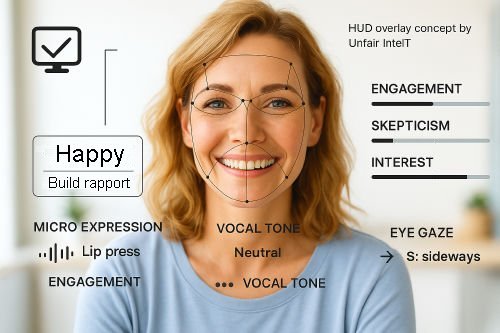

Example HUD concepts — final interface in development.

What You’re Seeing

- 👁️ Micro-expressions and facial signals

Subtle changes in expression that reveal comfort, tension, interest, or hesitation.

- 🎙️ Vocal tone and speech patterns

Changes in pace, clarity, confidence, and emotional tone.

- 💬 Engagement and attention

Real-time signals showing when focus increases, drops, or shifts.

- 🎯 Adaptive prompts

Discreet guidance suggesting when to pause, ask questions, reassure, or redirect.

- 🧠 Emotion and intent classification

Patterns detected across signals to estimate the emotional direction of the conversation.

- ⚙️ Real-time refinement

Guidance updates continuously as the meeting evolves.

- 🔒 Private, on-device processing

Signals are interpreted locally and remain visible only to you.

What Unfair Intel Analyzes

1) Visual Behavioral Signals

- Facial expressions & micro-expressions

- Eye behavior (blink rate, gaze direction)

- Posture shifts & body language

- Head movements & hand gestures

- Facial symmetry changes (skepticism, doubt, concern)

- Camera distance & positioning (comfort, formality, confidence)

2) Vocal & Tonal Signals

- Vocal tone (pitch, warmth, intensity)

- Speech rate & rhythm

- Pauses & silences

- Loudness variation (defensiveness, excitement, hesitation)

3) Contextual & Interaction Signals

- Response latency (hesitation, certainty, readiness)

- Engagement patterns (speaking turns, interruptions, energy)

- Chat & text sentiment

How Unfair Intel Uses These Signals

Unfair Intel will fuse visual, vocal, and behavioral cues using multimodal AI — grounded in modern emotional-intelligence research — and translate them into real-time, goal-driven guidance that helps you lead the room with clarity and confidence.

What You Get

- Clarity — See emotional shifts, resistance, and buy-in before they show up in words.

- Control — Private, real-time prompts suggest what to say or do next to move toward your goal.

- Confidence — Stop reacting. Stop guessing. Start acting and responding with emotional clarity and precision.

The ability to read the room shouldn’t disappear online.

We’re rebuilding the missing human layer of communication for virtual meetings. Join early access and help shape what comes next.

Join The Early Access List

The first 100 Early Access Leaders who bring aligned peers into the movement, and the first 100 Early Access Developers / Builders who help shape the build, will be recognised publicly at launch.

This isn’t about volume. It’s about alignment.